AI API Pricing Explained for Startups

Most startups underestimate AI costs badly.

At first, AI APIs seem cheap:

fractions of a cent per request,

low monthly bills,

and easy integration.

Then growth happens.

Usage spikes.

Token consumption explodes.

Infrastructure costs multiply.

Suddenly, an AI feature that looked affordable becomes one of the largest operational expenses in the business.

That’s why understanding AI API pricing is critical for startups in 2026.

The companies succeeding with AI today are not just building smart products. They’re building:

sustainable pricing models,

efficient token systems,

and scalable AI economics.

This guide explains how AI API pricing really works, what drives costs, and how startups can avoid expensive mistakes while scaling AI-powered products.

What Is AI API Pricing?

AI API pricing refers to the cost businesses pay to access AI models through external platforms.

Instead of building AI systems from scratch, startups use APIs from providers offering:

language models,

image generation,

speech recognition,

embeddings,

and automation tools.

Pricing is usually based on:

tokens,

requests,

compute usage,

or generated outputs.

The challenge is that many founders don’t fully understand how these billing systems scale over time.

Why AI Pricing Confuses Startups

Traditional SaaS pricing is relatively predictable.

AI pricing is different because costs fluctuate dynamically based on:

user behavior,

prompt size,

response length,

model complexity,

and traffic volume.

This creates unpredictable infrastructure expenses.

The Hidden Scaling Problem

A startup might test an AI feature successfully with 100 users.

But at 100,000 users, the economics can change dramatically.

Many AI startups fail not because the product is bad, but because the unit economics stop making sense.
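To make the scaling effect concrete, here is a minimal sketch of how a monthly bill grows with user count. Every number in it (requests per user, tokens per request, and the price per million tokens) is a hypothetical placeholder, not a real provider rate.

```python
def monthly_ai_cost(users, requests_per_user, tokens_per_request, price_per_million_tokens):
    """Estimate a monthly bill under simple linear per-token pricing."""
    total_tokens = users * requests_per_user * tokens_per_request
    return total_tokens / 1_000_000 * price_per_million_tokens

# Hypothetical assumptions: 60 requests per user per month,
# 1,500 tokens per request, $2.00 per million tokens.
pilot = monthly_ai_cost(100, 60, 1500, 2.00)
scaled = monthly_ai_cost(100_000, 60, 1500, 2.00)

print(f"Pilot (100 users):      ${pilot:,.2f}")   # $18.00
print(f"Scaled (100,000 users): ${scaled:,.2f}")  # $18,000.00
```

Under these assumptions the bill grows in direct proportion to users, so a cost that was invisible in the pilot becomes a real budget line at scale.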

The T.O.K.E.N Framework for Understanding AI Costs

Most founders think AI pricing is only about “cost per request.”

That’s incomplete.

To understand AI economics properly, startups should use the T.O.K.E.N Framework.

T — Token Consumption

Most modern language models charge based on tokens processed.

Tokens include:

prompts,

instructions,

conversations,

and outputs.

Longer interactions increase costs rapidly.

Example

A chatbot handling short FAQ responses costs far less than one producing long-form content, because every long response consumes many more output tokens.

This is why prompt efficiency matters enormously.
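The difference between the two workloads can be sketched with a small per-request cost function. The prices and token counts below are illustrative assumptions only. Check your provider's current rate card before relying on any figure.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class TokenPrices:
    """Hypothetical prices in dollars per million tokens."""
    input_per_million: float
    output_per_million: float

def request_cost(prices, input_tokens, output_tokens):
    """Cost of one request when input and output tokens are billed separately."""
    total = (input_tokens * prices.input_per_million
             + output_tokens * prices.output_per_million)
    return total / 1_000_000

# Assumed rates: $1.00 per million input tokens, $4.00 per million output tokens.
prices = TokenPrices(input_per_million=1.00, output_per_million=4.00)

faq_cost = request_cost(prices, input_tokens=300, output_tokens=100)
longform_cost = request_cost(prices, input_tokens=500, output_tokens=2000)

print(f"FAQ answer:        ${faq_cost:.4f}")
print(f"Long-form article: ${longform_cost:.4f}")
print(f"Ratio: {longform_cost / faq_cost:.1f}x")
```

With these assumed numbers, the long-form request costs roughly 12 times as much as the FAQ answer, and most of that gap comes from output tokens.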

O — Output Complexity

More advanced outputs require more computational resources.

AI tasks like:

code generation,

reasoning,

and multi-step analysis

usually cost more than simple text completion.

The smarter the model, the higher the pricing tier tends to be.

K — Knowledge Processing

Some AI systems charge additional fees for:

embeddings,

vector storage,

retrieval systems,

and memory layers.

Founders often overlook these backend expenses when forecasting budgets.

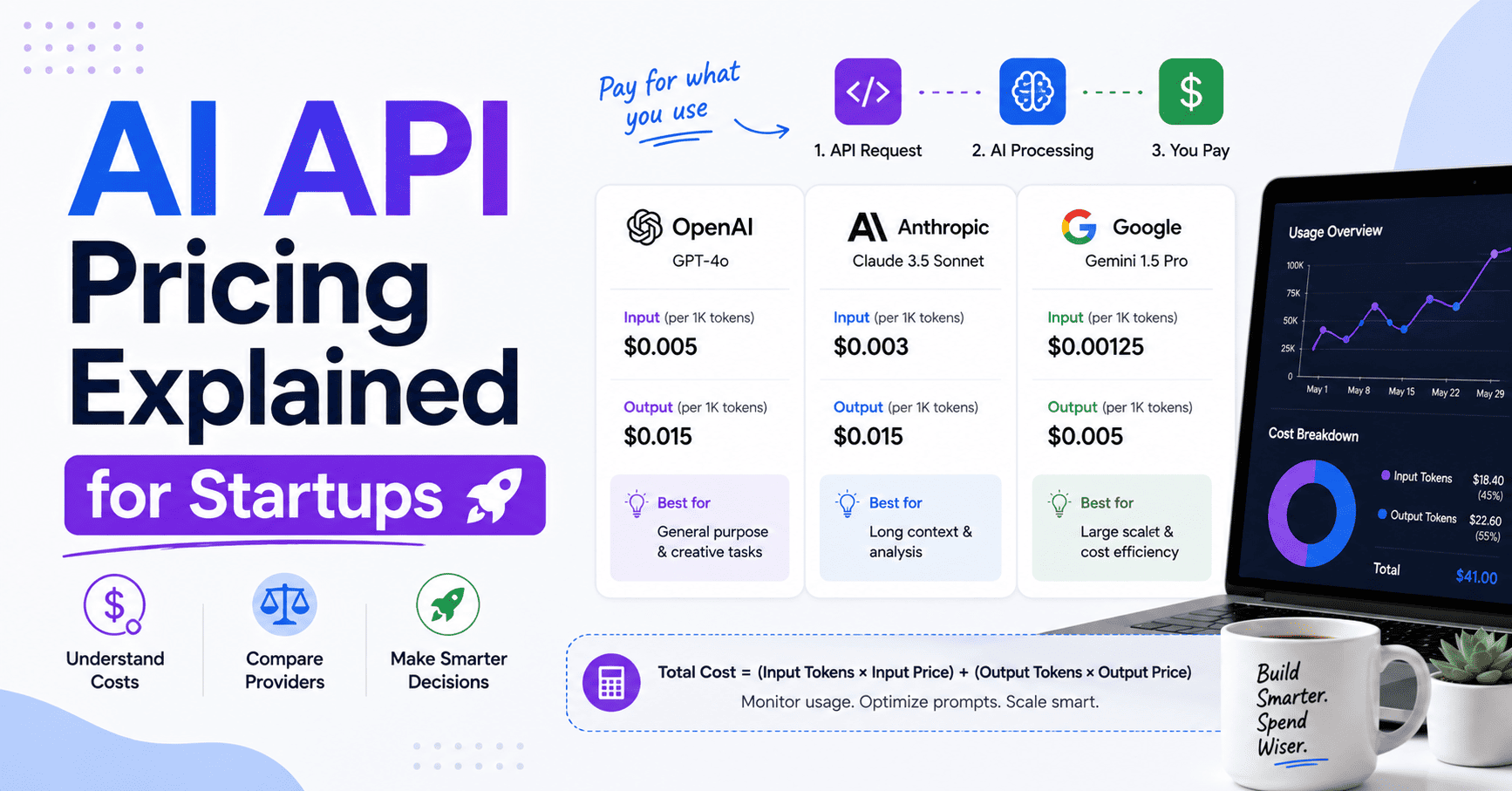

The Most Common AI Pricing Models

AI providers structure pricing differently depending on their infrastructure.

1. Token-Based Pricing

This is the most common model.

Businesses pay for:

input tokens,

output tokens,

or both.

Long conversations and large outputs increase costs.

2. Request-Based Pricing

Some APIs charge per:

image generated,

voice transcription,

or request processed.

This works well for predictable workloads.

3. Subscription Pricing

Some AI platforms now offer:

fixed monthly plans,

usage bundles,

or enterprise licensing.

This provides more predictable budgeting.

4. Compute-Based Pricing

Advanced AI infrastructure sometimes charges based on:

GPU usage,

processing power,

or execution time.

This is common in custom AI deployments.

Why Token Efficiency Matters More Than Most Founders Realize

Token waste quietly destroys AI profitability.

Many startups send:

bloated prompts,

repetitive context,

or unnecessary instructions.

That increases costs dramatically at scale.

Example

Under per-token pricing, an inefficient prompt using 4,000 tokens costs roughly 4x more in input charges than an optimized prompt delivering the same result with 1,000 tokens.

At startup scale, this difference becomes enormous.
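As a rough illustration of what that gap means in dollars, the sketch below compares the two prompt sizes over a month of traffic. The request volume and the input price are hypothetical assumptions chosen to keep the arithmetic easy to follow.

```python
def monthly_input_cost(prompt_tokens, requests_per_month, price_per_million):
    """Input-token spend for one prompt template over a month."""
    return prompt_tokens * requests_per_month * price_per_million / 1_000_000

# Assumptions: 1,000,000 requests per month at $1.00 per million input tokens.
REQUESTS = 1_000_000
PRICE = 1.00

bloated = monthly_input_cost(4000, REQUESTS, PRICE)
optimized = monthly_input_cost(1000, REQUESTS, PRICE)
savings = bloated - optimized

print(f"Bloated prompt:   ${bloated:,.2f}")    # $4,000.00
print(f"Optimized prompt: ${optimized:,.2f}")  # $1,000.00
print(f"Monthly savings:  ${savings:,.2f}")    # $3,000.00
```

The 4x ratio stays constant, but the absolute savings grow with every additional request.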

The Biggest AI API Cost Drivers

Several hidden variables influence pricing heavily.

1. Model Selection

Premium models cost more but often deliver:

better reasoning,

higher accuracy,

and stronger outputs.

Cheaper models reduce costs but may require:

additional retries,

moderation,

or manual correction.

Startups must balance:

quality,

speed,

and profitability.

2. User Behavior

Heavy users create disproportionate infrastructure costs.

One power user can generate thousands of API requests daily.

Without usage limits, costs can spiral quickly.

3. Context Windows

Larger context windows increase token processing significantly.

Long AI conversations are expensive because previous messages remain part of the prompt context.
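A short simulation shows why this adds up so quickly. It assumes the full history is resent on every turn and that every user message and every reply is the same size, which is a simplification made purely for illustration.

```python
def cumulative_input_tokens(turns, tokens_per_message):
    """Total input tokens billed across a conversation that resends its full history."""
    total = 0
    history = 0  # tokens already in the conversation before the current turn
    for _ in range(turns):
        prompt = history + tokens_per_message   # history plus the new user message
        total += prompt
        history = prompt + tokens_per_message   # the reply joins the history too
    return total

print(cumulative_input_tokens(10, 200))  # 20000
print(cumulative_input_tokens(20, 200))  # 80000
```

Under these assumptions, doubling the conversation length quadruples the input tokens billed, because each turn pays again for everything that came before it.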

4. Real-Time Processing

Live AI systems need low-latency responses, which often raises infrastructure costs.

How Smart Startups Control AI Costs

The best AI startups optimize economics aggressively from the beginning.

Strategy #1: Use Tiered AI Models

Not every task requires premium AI.

Many companies route:

simple tasks to cheaper models,

and complex tasks to advanced models.

This hybrid approach reduces expenses significantly.
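One way to picture this routing is a small function that picks a model tier per request. The model names, the task labels, and the length threshold here are all hypothetical. A real router would likely use richer signals than task type and prompt length.

```python
# Task labels treated as "complex" in this sketch (an assumption, not a standard).
COMPLEX_TASKS = {"code_generation", "reasoning", "multi_step_analysis"}

def choose_model(task_type: str, prompt: str, long_prompt_chars: int = 4000) -> str:
    """Return a hypothetical model tier name for a request."""
    if task_type in COMPLEX_TASKS or len(prompt) > long_prompt_chars:
        return "premium-model"
    return "budget-model"

print(choose_model("faq", "What are your opening hours?"))        # budget-model
print(choose_model("reasoning", "Compare these two contracts."))  # premium-model
```

Keeping the routing rule in one place also makes it easy to measure how much traffic each tier receives and to adjust the thresholds later.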

Strategy #2: Limit Context Size

Reducing unnecessary prompt history lowers token costs immediately.

Smarter prompt engineering improves profitability.
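A simple way to limit context is to keep only the most recent messages that fit a token budget. The sketch below assumes a crude estimate of about four characters per token. Real tokenizers differ, so treat `estimate_tokens` as a stand-in for your provider's tokenizer.

```python
def estimate_tokens(text):
    """Very rough token estimate (assumes ~4 characters per token)."""
    return max(1, len(text) // 4)

def trim_history(messages, max_tokens):
    """Keep the newest messages whose combined estimate fits within max_tokens."""
    kept = []
    used = 0
    for message in reversed(messages):  # walk from newest to oldest
        cost = estimate_tokens(message["content"])
        if used + cost > max_tokens:
            break
        kept.append(message)
        used += cost
    kept.reverse()  # restore chronological order
    return kept

history = [
    {"role": "user", "content": "a" * 400},
    {"role": "assistant", "content": "b" * 400},
    {"role": "user", "content": "c" * 400},
]
trimmed = trim_history(history, max_tokens=250)
print(len(trimmed))  # 2
```

Dropping old turns can remove information the model needs, so some teams summarize older messages instead of discarding them. That trade-off is outside the scope of this sketch.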

Strategy #3: Implement Usage Caps

AI products without limits often become financially unstable.

Many startups now introduce:

usage quotas,

premium plans,

or feature gating.
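A usage quota can be as simple as a per-user counter checked before each API call. The plan names and limits below are hypothetical. This in-memory sketch also skips the daily reset and persistence that a production system would need.

```python
from collections import defaultdict

class TokenQuota:
    """Tracks token usage per user against a per-plan limit (in memory only)."""

    def __init__(self, limits):
        self.limits = limits          # e.g. {"free": 1000, "pro": 10000}
        self.used = defaultdict(int)  # user_id -> tokens consumed so far

    def try_consume(self, user_id, plan, tokens):
        """Record usage and return True, or return False if it would exceed the limit."""
        if self.used[user_id] + tokens > self.limits[plan]:
            return False
        self.used[user_id] += tokens
        return True

quota = TokenQuota({"free": 1000, "pro": 10000})
print(quota.try_consume("user-1", "free", 600))  # True
print(quota.try_consume("user-1", "free", 600))  # False, would exceed 1000
```

When a request is rejected, the product can prompt an upgrade to a premium plan rather than silently absorbing the cost.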

Strategy #4: Cache Frequent Responses

Responses to repeated requests can often be stored and reused instead of being regenerated.

This reduces API calls dramatically.
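The idea can be sketched as a cache keyed on a normalized version of the prompt. Here `fake_model` is a hypothetical stand-in for a real API call, and the normalization (lowercasing and collapsing whitespace) is one possible choice. It only suits cases where such variants should share an answer.

```python
import hashlib

class ResponseCache:
    """Caches generated responses so identical prompts trigger only one API call."""

    def __init__(self, generate):
        self._generate = generate  # function that calls the model
        self._store = {}
        self.api_calls = 0

    def _key(self, prompt):
        normalized = " ".join(prompt.lower().split())
        return hashlib.sha256(normalized.encode("utf-8")).hexdigest()

    def get(self, prompt):
        key = self._key(prompt)
        if key not in self._store:
            self.api_calls += 1
            self._store[key] = self._generate(prompt)
        return self._store[key]

def fake_model(prompt):
    # Hypothetical placeholder for a real, billable API call.
    return f"answer to: {prompt.strip().lower()}"

cache = ResponseCache(fake_model)
first = cache.get("What are your opening hours?")
second = cache.get("  what are your OPENING hours? ")
print(cache.api_calls)  # 1
```

Caching fits stable answers such as FAQs best. Personalized or time-sensitive responses usually need an expiry policy or should bypass the cache.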

The Future of AI API Pricing

AI pricing is evolving rapidly.

Competition among providers is already driving:

lower inference costs,

faster models,

and more flexible pricing structures.

But at the same time:

user expectations are rising.

Customers increasingly expect:

faster responses,

multimodal AI,

real-time reasoning,

and personalized outputs.

This creates an interesting challenge:

AI becomes cheaper per request while total usage keeps growing, so overall spend can still rise.

The startups that win will not necessarily use the cheapest models.

They’ll build:

the smartest economic systems.

Common AI Pricing Mistakes Startups Make

1. Ignoring Unit Economics

Many startups launch AI features without understanding long-term cost structures.

2. Using Premium Models Everywhere

Overusing expensive models destroys margins quickly.

3. Poor Prompt Optimization

Inefficient prompts increase token usage massively at scale.

4. Underpricing AI Features

Some startups attract users successfully but lose money on every interaction.

Growth without sustainable margins becomes dangerous fast.
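A quick margin check per user makes this risk visible. The subscription price, request counts, and cost per request below are hypothetical figures used only to show the calculation.

```python
def monthly_margin(subscription_price, requests_per_month, cost_per_request):
    """Revenue minus AI API cost for one user in one month."""
    return subscription_price - requests_per_month * cost_per_request

# Assumptions: a $20/month plan and an average AI cost of $0.01 per request.
light_user = monthly_margin(20.00, requests_per_month=500, cost_per_request=0.01)
power_user = monthly_margin(20.00, requests_per_month=5000, cost_per_request=0.01)

print(f"Light user margin: ${light_user:,.2f}")  # positive: the plan covers the cost
print(f"Power user margin: ${power_user:,.2f}")  # negative: this user loses money
```

Running this kind of check across real usage data shows which plans or user segments need caps, cheaper models, or a higher price.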

Final Thoughts

AI APIs are transforming software development faster than most industries expected.

But AI pricing is not as simple as “pay per request.”

Successful startups understand:

token economics,

prompt efficiency,

infrastructure scaling,

and monetization strategy deeply.

Because in 2026, the biggest AI advantage is not just building intelligent products.

It’s building profitable ones.

And the startups that master AI API economics early will scale far more sustainably than competitors chasing growth without understanding costs.

FAQ: AI API Pricing for Startups

What is AI API pricing?

AI API pricing refers to the cost businesses pay to access AI services such as language models, image generation, or speech recognition.

Why do AI costs increase so quickly?

AI costs scale with usage, token consumption, output size, and model complexity.

What are tokens in AI pricing?

Tokens are pieces of text processed by AI systems, including prompts and responses.

How can startups reduce AI API costs?

Startups can reduce costs through prompt optimization, caching, usage limits, and hybrid model strategies.

Why are advanced AI models more expensive?

Advanced models require more computational resources and infrastructure power.

What is the biggest AI pricing mistake startups make?

Ignoring long-term unit economics while scaling usage too quickly.
